Veeam’s Backup Copy Job can do more than its name suggests. Its primary task is to copy existing backup data to another disk system, either to restore it from there later or to send it to an offsite location (see the 3-2-1 Rule).

Backup Copy process

Once started, a Backup Copy Job runs continuously in the background and follows this logic:

- At a specific point in time, it checks whether any new backup data is available.

- If there is no new data, the job waits until new backup data appears.

- When a new backup is found, the job copies it.

- If not all data can be transferred within the defined time window, the job keeps retrying the transfer until all data has been saved to the target location.

Configuration steps

The first configuration step defines a time window and the point in time at which the Backup Copy Job checks for newly available backup data.

In Figure 1, the interval is set to one day and the check-up for new backup data is set to 01:00. If the backup repository contains new data created since 01:00 on the previous day, the Backup Copy Job starts transferring it immediately; if not, it keeps waiting. This mechanism significantly simplifies scheduling, as it gives the Backup Copy Job more time for the data transfer if needed.
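The check-up logic above can be sketched in a few lines of Python. This is a simplified model, not Veeam code: the function name `restore_point_to_copy` and the sample timestamps are hypothetical, and the sketch assumes that the newest restore point created since the previous check-up is the one that gets copied.

```python
from datetime import datetime, timedelta
from typing import List, Optional


def restore_point_to_copy(restore_points: List[datetime],
                          check_time: datetime,
                          interval: timedelta) -> Optional[datetime]:
    """Return the newest restore point created since the previous check-up.

    None means there is no new data yet, so the job keeps waiting.
    (Hypothetical model of the behavior described in the text.)
    """
    window_start = check_time - interval  # e.g. 01:00 on the previous day
    new_points = [p for p in restore_points if window_start <= p <= check_time]
    return max(new_points) if new_points else None


# Daily interval with the check-up at 01:00, as in Figure 1 (sample timestamps)
interval = timedelta(days=1)
check = datetime(2017, 3, 2, 1, 0)
points = [datetime(2017, 2, 28, 22, 0), datetime(2017, 3, 1, 22, 0)]

print(restore_point_to_copy(points, check, interval))      # 2017-03-01 22:00:00
print(restore_point_to_copy(points[:1], check, interval))  # None
```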

However, in some situations the Backup Copy Job has to wait for a specific backup before it can transfer new data. For example:

- A Backup Job runs, let’s say, every six hours (00:00, 06:00, 12:00 and 18:00 [6:00 p.m.]).

- The Backup Copy Job has to copy the 18:00 backup.

Because no one can predict when the 18:00 backup will be completed, you can set the Backup Copy Job’s time to “much later”, for example 19:00 (7:00 p.m.). Alternatively, starting with version 8, you can instruct the Backup Copy Job to wait until the new backup data has been written, using the following registry value:

Registry value BackupCopyLookForward under HKEY_LOCAL_MACHINE\SOFTWARE\Veeam\Veeam Backup and Replication\

With this registry value in place, you can set the time to exactly 18:00, and the Backup Copy Job will wait until the 18:00 Backup Job has completed.
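To make the difference concrete, here is a rough model of which restore point an interval starting at 18:00 would pick, with and without the look-forward behavior. The function `select_restore_point`, its `look_forward` flag and the helper `at` are hypothetical names; the model assumes that by default the job takes the newest restore point already completed when the interval starts.

```python
from datetime import datetime
from typing import List, Optional


def select_restore_point(completed_points: List[datetime],
                         interval_start: datetime,
                         look_forward: bool) -> Optional[datetime]:
    """Pick the restore point a copy interval transfers (simplified model).

    Default: the newest point already completed when the interval starts.
    Look-forward: the first point created at or after the interval start;
    None means the job keeps waiting for it.
    """
    if look_forward:
        later = [p for p in completed_points if p >= interval_start]
        return min(later) if later else None
    earlier = [p for p in completed_points if p <= interval_start]
    return max(earlier) if earlier else None


def at(hour: int) -> datetime:
    # Sample day; only the hour matters for this example
    return datetime(2017, 3, 1, hour, 0)


completed = [at(0), at(6), at(12)]  # the 18:00 backup is still running
print(select_restore_point(completed, at(18), look_forward=False))  # 2017-03-01 12:00:00
print(select_restore_point(completed, at(18), look_forward=True))   # None
completed.append(at(18))  # the 18:00 backup has finished
print(select_restore_point(completed, at(18), look_forward=True))   # 2017-03-01 18:00:00
```

With the default behavior, an interval starting at 18:00 would pick up the 12:00 backup, which is why the start time would otherwise have to be moved to “much later”.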

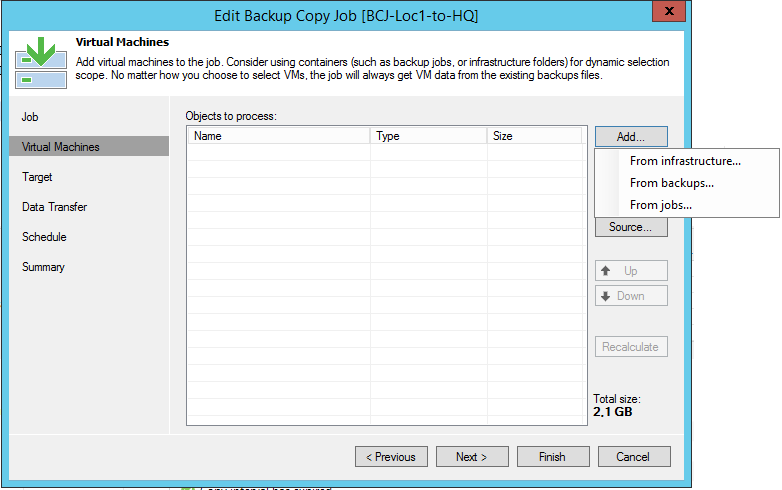

The next step is to choose the source for the Backup Copy Job.

There are the following choices:

- From infrastructure: You can select either individual virtual machines or VM containers; the Backup Copy Job automatically searches for the most recent backup.

- From backups: You can select specific VMs from backups or entire backups containing multiple VMs.

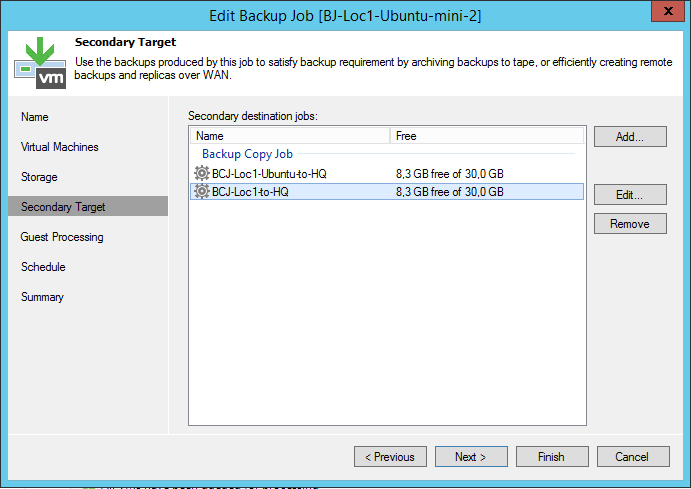

- From jobs: You can select existing backup jobs; the Backup Copy Job is then automatically added to the Secondary Target tab of the original backup job:

Figure 3. Secondary target in the backup job configuration

A Backup Copy Job can have multiple sources. If a VM is available from multiple sources, the job transfers only its latest version. A Backup Job can also have multiple secondary targets (see Figure 3).
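The rule that only the latest version of a VM is transferred when several sources contain it can be illustrated as follows. The tuple layout, job names and timestamps are invented for the example; this is not how Veeam stores restore points.

```python
from datetime import datetime
from typing import Dict, List, Tuple

# (vm_name, created, source): hypothetical layout for this example
RestorePoint = Tuple[str, datetime, str]


def latest_per_vm(points: List[RestorePoint]) -> Dict[str, RestorePoint]:
    """Keep only the most recent restore point of each VM across all sources."""
    latest: Dict[str, RestorePoint] = {}
    for point in points:
        vm, created, _source = point
        if vm not in latest or created > latest[vm][1]:
            latest[vm] = point
    return latest


points = [
    ("fileserver", datetime(2017, 3, 1, 22, 0), "Job A"),
    ("fileserver", datetime(2017, 3, 2, 6, 0), "Job B"),
    ("sql01", datetime(2017, 3, 1, 23, 0), "Job A"),
]
for vm, (_, created, source) in sorted(latest_per_vm(points).items()):
    print(vm, created, source)
# fileserver 2017-03-02 06:00:00 Job B
# sql01 2017-03-01 23:00:00 Job A
```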

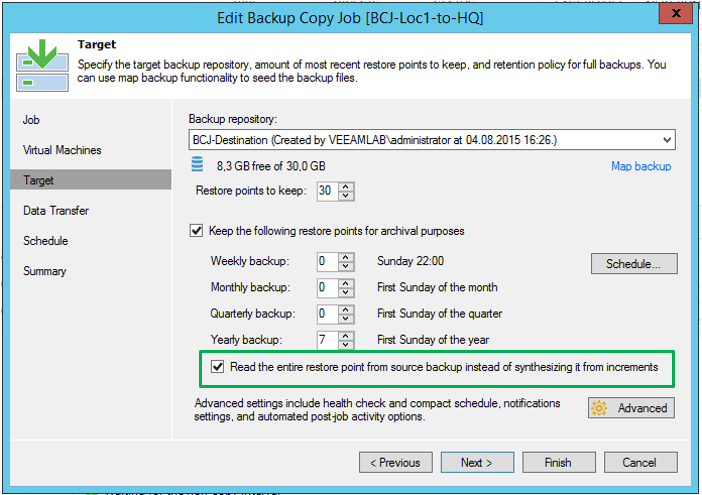

After selecting the source(s), you define the target, a Veeam repository. Figure 4 shows the available settings:

Figure 4. Specifying the target

The Backup Copy Job always creates at least a Forward Incremental Forever backup chain, whose length is defined by the option “Restore points to keep”. Additionally, the option “Keep the following restore points for archival purposes” inserts full backup points into the chain on specific days. This corresponds to the traditional Grandfather-Father-Son (GFS) principle.

These archival restore points are saved as full backups. Note: The full backup is not automatically generated on the configured day (for example, the first Sunday of the month)! A check-up is performed first, and the full backup is created only if the check-up reveals new data to be backed up.
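The rule in the note above boils down to two conditions: the configured GFS day must have arrived, and the check-up must have found new data. A minimal sketch, assuming a monthly GFS point on the first Sunday of the month; the helper names are hypothetical, and what the job does on a due day without new data is deliberately not modeled.

```python
from datetime import date

SUNDAY = 6  # date.weekday(): Monday=0 .. Sunday=6


def is_first_weekday_of_month(day: date, weekday: int) -> bool:
    """True if `day` is the first occurrence of `weekday` in its month."""
    return day.weekday() == weekday and day.day <= 7


def create_monthly_full(day: date, check_found_new_data: bool,
                        weekday: int = SUNDAY) -> bool:
    """A monthly GFS full is due on the first <weekday> of the month,
    but it is only built if the check-up has found new data."""
    return is_first_weekday_of_month(day, weekday) and check_found_new_data


print(create_monthly_full(date(2017, 3, 5), True))   # True  (first Sunday)
print(create_monthly_full(date(2017, 3, 5), False))  # False (no new data)
print(create_monthly_full(date(2017, 3, 12), True))  # False (second Sunday)
```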

The Backup Copy Job works as “incremental forever”: only changed data is transferred, and the full backups are synthesized at the target location. This is especially practical when data has to be transferred over low-bandwidth links.
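How a full backup can be produced at the target without re-reading it from the source is easiest to see with a toy block model. This sketch assumes a backup is just a mapping from block number to content and ignores deleted blocks, compression and Veeam’s actual file format; `synthesize_full` is a hypothetical name.

```python
from typing import Dict, List

# A backup modeled as {block_id: data}; an increment holds only changed blocks.
Backup = Dict[int, bytes]


def synthesize_full(full: Backup, increments: List[Backup]) -> Backup:
    """Build a new full backup at the target from the last full plus increments.

    Nothing is re-read from the source: later increments simply overwrite
    the blocks they changed. The input full backup is left untouched.
    """
    synthetic = dict(full)
    for increment in increments:
        synthetic.update(increment)
    return synthetic


full = {0: b"A", 1: b"B", 2: b"C"}
increments = [{1: b"B1"}, {2: b"C1"}, {1: b"B2"}]
print(synthesize_full(full, increments))  # {0: b'A', 1: b'B2', 2: b'C1'}
```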

Space consumption with GFS

In the example above (Figure 5), many full backups would be generated. Even though a single full backup can serve as both a weekly and a monthly restore point, the required space in this case would be enormous.

To reduce the required space, there are several options:

- Weekly restore points can usually be replaced by setting “Restore points to keep” to 30, as 30 incremental backups normally take less space than four full backups.

- Using a deduplication appliance, the Windows Server 2016 ReFS integration, or Windows deduplication (with limited scalability on Windows Server 2012).

- Using a second Backup Copy Job with a 30-day interval to replace the monthly/quarterly full backups.
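The first option rests on a simple comparison. A back-of-the-envelope sketch, assuming 30 daily increments of equal average size replace four weekly full backups; all sizes are hypothetical. Under that assumption, the increments win as long as the average increment stays below 4/30 (roughly 13%) of the full backup.

```python
def increments_cheaper(full_gb: float, avg_increment_gb: float,
                       increments: int = 30, weekly_fulls: int = 4) -> bool:
    """True if `increments` incremental backups need less space than
    `weekly_fulls` full backups of size `full_gb` (hypothetical sizes)."""
    return increments * avg_increment_gb < weekly_fulls * full_gb


print(f"break-even: {4 / 30:.1%} of the full backup per increment")
print(increments_cheaper(1000, 50))   # True:  1,500 GB vs. 4,000 GB
print(increments_cheaper(1000, 150))  # False: 4,500 GB vs. 4,000 GB
```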

The third option is less obvious, so here is how it works:

- Set the option “Copy every” to 30 days (see Figure 1: Job definition).

- Set the option “Restore points to keep” to 12.

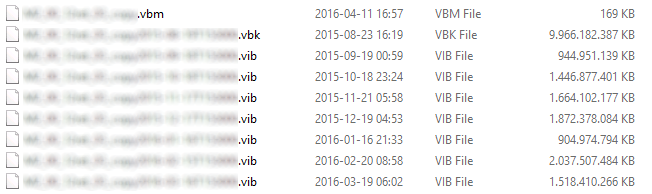

This results in one full backup and eleven incremental backups, each representing one month. It does not matter how many restore points the original Backup Job contains: the Backup Copy Job is not a simple copy tool, but determines which blocks have changed within the defined period and transfers only those. Figure 6 shows an example of the space savings compared to the GFS scheme.
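Working on changed blocks rather than copying restore points one by one means that a block changed several times within the 30-day interval is transferred only once. A toy model with made-up daily snapshots; `changed_blocks` is a hypothetical helper and not Veeam’s change tracking.

```python
from typing import Dict, List, Set

# Daily snapshots of a disk, modeled as {block_id: content} (made-up data).
Snapshot = Dict[int, str]


def changed_blocks(old: Snapshot, new: Snapshot) -> Set[int]:
    """Blocks whose content differs between two points in time."""
    return {b for b in new if old.get(b) != new[b]}


days: List[Snapshot] = [
    {0: "a", 1: "b", 2: "c"},    # day 0: start of the 30-day interval
    {0: "a", 1: "b1", 2: "c"},   # block 1 changed
    {0: "a", 1: "b2", 2: "c"},   # block 1 changed again
    {0: "a", 1: "b2", 2: "c1"},  # block 2 changed
]

# Sum of all daily increments vs. one increment covering the whole interval
daily_total = sum(len(changed_blocks(a, b)) for a, b in zip(days, days[1:]))
monthly = changed_blocks(days[0], days[-1])
print(daily_total, sorted(monthly))  # 3 [1, 2]
```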

Figure 6. Example of monthly incremental instances

The example shows a file server with a full backup (.vbk file) of almost 10 TB. The monthly change rate is about 1-2 TB (the size of the .vib files). Compared with a monthly full backup, this saves about 8-9 TB per month, which adds up to a significant amount over a twelve-month period. Note: Yearly backups would be configured as shown in Figure 8 with the GFS daily Backup Copy Job, since the maximum copy interval is 100 days.
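The numbers in this example can be checked quickly. The sketch rounds the full backup up to 10 TB and compares twelve monthly full backups with one full backup plus eleven monthly increments; it uses only the figures quoted above, and `yearly_space_tb` is a hypothetical helper.

```python
from typing import Tuple


def yearly_space_tb(full_tb: float, monthly_change_tb: float,
                    months: int = 12) -> Tuple[float, float]:
    """Return (monthly GFS fulls, 1 full + monthly increments) in TB."""
    gfs_fulls = months * full_tb
    incremental_chain = full_tb + (months - 1) * monthly_change_tb
    return gfs_fulls, incremental_chain


for change in (1.0, 2.0):  # monthly change rate of 1-2 TB
    gfs, chain = yearly_space_tb(10.0, change)
    print(f"{change} TB/month: GFS {gfs} TB, chain {chain} TB, saved {gfs - chain} TB")
# 1.0 TB/month: GFS 120.0 TB, chain 21.0 TB, saved 99.0 TB
# 2.0 TB/month: GFS 120.0 TB, chain 32.0 TB, saved 88.0 TB
```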

However, this option has a disadvantage that should not be ignored: if the backup chain is damaged (a bit error, accidental deletion of data), some of the monthly backups can become unusable.

Therefore, this option should ideally be combined with tape backups (see Figure 7).
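Which monthly backups are lost depends on where the damage occurs, because in a forward incremental chain restore point i needs the full backup and every increment up to i. A minimal model; the function name is hypothetical and the chain is indexed from 0 (the full backup).

```python
from typing import List


def usable_points(chain_length: int, damaged: int) -> List[int]:
    """Restore points that can still be restored in a forward incremental chain.

    Point 0 is the full backup; point i (i > 0) needs the full and every
    increment up to i, so damaging point `damaged` breaks it and all later points.
    """
    return list(range(min(damaged, chain_length)))


# 1 full + 11 monthly increments; the sixth restore point (index 5) is damaged
print(usable_points(12, 5))  # [0, 1, 2, 3, 4]
```

Damage to the full backup itself (index 0) leaves no usable restore point at all, which is why a second, independent copy such as tape matters here.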

Figure 7. The Backup Copy Job and Tape

The same applies to deduplication appliances and Windows deduplication: if the deduplication database is damaged, the whole backup chain is at risk.

Veeam Backup & Replication v9 introduced a new option: “Read the entire restore point from source backup instead of synthesizing it from increments” (see Figure 8). This is the exact opposite of the behavior described above, where the Backup Copy Job always works incrementally. It is intended for systems with very poor synthetic I/O performance, mainly deduplication appliances without Veeam integration and small disk systems (two to eight hard drives) in SMB environments. In this case, sufficient bandwidth must be available, because the complete data is transferred again between the primary and the secondary backup location.
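To judge whether the available bandwidth is sufficient for re-reading entire restore points, a rough transfer-time estimate helps. The sketch assumes decimal units (1 TB = 10^12 bytes), a fully used link and no compression or WAN Acceleration; the 10 TB size is borrowed from the file server example above, and `transfer_hours` is a hypothetical helper.

```python
def transfer_hours(size_tb: float, bandwidth_mbit_s: float) -> float:
    """Hours needed to move `size_tb` (decimal TB) over a link of `bandwidth_mbit_s`."""
    size_bits = size_tb * 1e12 * 8
    return size_bits / (bandwidth_mbit_s * 1e6) / 3600


for mbit in (1000, 100):
    print(f"10 TB over {mbit} Mbit/s: {transfer_hours(10, mbit):.1f} h")
# 10 TB over 1000 Mbit/s: 22.2 h
# 10 TB over 100 Mbit/s: 222.2 h
```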

Figure 8. Transfer new Restore Point

The second-to-last step (Figure 9) lets you enable WAN Acceleration.

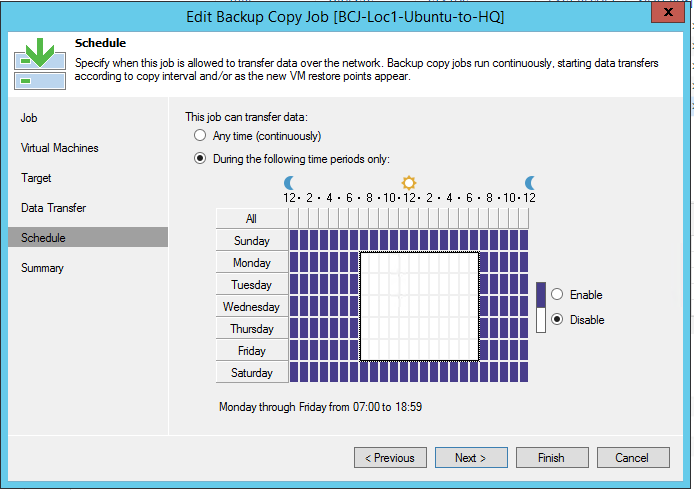

The last configuration step lets you restrict the Backup Copy Job’s activity to specific times. In Figure 10, no data is transferred during working hours on weekdays. Bandwidth limits are set up in the global “Network Traffic” settings.
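A schedule restriction like the one in Figure 10 is essentially a predicate over the current time. A minimal sketch; the 08:00-18:00 working hours and the function name `transfer_allowed` are assumptions, since the exact window in the figure is not given in the text.

```python
from datetime import datetime, time

# Hypothetical blocked window: weekdays 08:00-18:00
BLOCK_START = time(8, 0)
BLOCK_END = time(18, 0)


def transfer_allowed(moment: datetime) -> bool:
    """False during working hours on weekdays, True otherwise."""
    is_weekday = moment.weekday() < 5  # Monday=0 .. Friday=4
    in_working_hours = BLOCK_START <= moment.time() < BLOCK_END
    return not (is_weekday and in_working_hours)


print(transfer_allowed(datetime(2017, 3, 1, 10, 0)))  # False (Wednesday, 10:00)
print(transfer_allowed(datetime(2017, 3, 1, 20, 0)))  # True  (Wednesday, 20:00)
print(transfer_allowed(datetime(2017, 3, 4, 10, 0)))  # True  (Saturday)
```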

Figure 10. Schedule Restrictions

Advanced settings

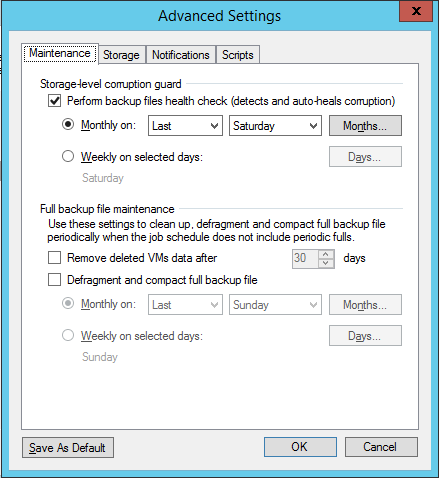

There are some interesting advanced settings in the Backup Copy Job’s target step (see Figure 4). The maintenance settings are shown in Figure 11:

By default, Veeam performs an integrity check for every Backup Copy Job once a month, on the last Saturday of the month. Its purpose is to find errors caused by the storage system (silent data corruption): Veeam reads all data blocks of the backup and verifies that their checksums are correct. Because each check reads the entire backup and generates a heavy I/O load on the storage system, it is recommended to spread the integrity checks of multiple Backup Copy Jobs across several days.
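The integrity check described above amounts to recomputing a checksum for every block and comparing it with the value stored when the block was written. A minimal sketch; SHA-256, the per-block layout and the function names are assumptions for illustration, as the article does not describe Veeam’s checksum format.

```python
import hashlib
from typing import List


def block_checksum(data: bytes) -> str:
    # SHA-256 is an assumption for this sketch
    return hashlib.sha256(data).hexdigest()


def verify_backup(blocks: List[bytes], stored_checksums: List[str]) -> List[int]:
    """Read every block, recompute its checksum and return indices that don't match.

    Assumes one stored checksum per block, in the same order.
    """
    return [i for i, (data, expected) in enumerate(zip(blocks, stored_checksums))
            if block_checksum(data) != expected]


blocks = [b"block-0", b"block-1", b"block-2"]
stored = [block_checksum(b) for b in blocks]  # recorded when the backup was written
blocks[1] = b"block-1 with a flipped bit"     # simulated silent data corruption
print(verify_backup(blocks, stored))  # [1]
```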

There is also an option to defragment the full backup regularly, which can bring modest long-term performance improvements and space savings.

Summary

The Backup Copy Job mechanism is much more flexible than its name would suggest. It is a robust tool that simplifies compliance with the 3-2-1 Rule.