Hyper-Converged Infrastructure (HCI) is starting to disrupt traditional storage markets (SAN, NAS and DAS) as enterprise IT begins to replicate the infrastructure of Hyperscale public cloud providers. Public cloud giants such as AWS and Microsoft Azure have developed their own scale-out, software-defined enterprise storage built on commodity servers and disks. Moving the enterprise features of existing SAN and NAS devices into software allows the use of commodity hardware, while shifting the storage directly onto the hosts improves performance and scalability. Storage scales out together with the compute resources, providing a truly scalable and cost-effective solution.

A report from 2016 shows the predicted shift from traditional storage to HCI and Hyperscale technologies over the next 10 years.

Several vendors provide HCI solutions, but I am not here to compare their features. VMware's HCI offering combines VMware vSphere with its Software-Defined Storage (SDS) solution, VMware vSAN. In this post I will cover vSAN and Veeam's recent announcement of further support for it.

VMware vSAN: What is it and what challenges does it overcome?

With traditional storage, customers faced several challenges: the hardware was proprietary rather than commodity, and it often created storage silos that lacked granular control. Deploying traditional storage could also be time-consuming, because it often involved multiple teams and lacked automation.

By moving storage into software, vSAN provides a linearly scalable solution that uses the same management and monitoring tools VMware admins already use, while also offering a modern, policy-based, automated approach.

What does the VMware vSAN architecture look like?

vSAN is an object-based storage system: VMs and snapshots are broken down into objects, and each object is made up of components. vSAN objects include:

- VM Home Namespace (VMX, NVRAM)

- VM Swap (Virtual Memory Swap)

- Virtual Disk (VMDK)

- Snapshot Delta Disk

- Snapshot Memory Delta

Other objects can also exist, such as the vSAN performance service database or VMDKs that belong to iSCSI targets. An object is made up of one or more components, depending on factors such as the object's size and the storage policy assigned to it. A storage policy defines settings such as Failures to Tolerate (FTT) and the number of disk stripes per object. For example, an object with FTT=1 using RAID 1 (mirroring) has two full copies of the data on two different hosts, plus a witness component on a third host, so it can tolerate the failure of an entire host. Rack awareness and multi-site fault domains can also be configured to control how objects are distributed.
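
The RAID 1 example above generalizes to higher FTT values. This Python sketch assumes the commonly cited rule for mirrored objects: FTT + 1 full replicas plus FTT witness components, each on a different host, so a RAID 1 policy needs 2 × FTT + 1 hosts and consumes FTT + 1 times the object's size in raw capacity. This is a simplification (actual witness counts can vary with striping and object layout), and the function names are hypothetical.

```python
def raid1_requirements(ftt: int) -> dict:
    """Hosts and raw-capacity multiplier for a RAID 1 (mirroring) storage policy.

    Simplified model: FTT + 1 full replicas plus FTT witness components,
    each placed on a distinct host.
    """
    if not 0 <= ftt <= 3:
        raise ValueError("RAID 1 policies support FTT values from 0 to 3")
    return {
        "replicas": ftt + 1,
        "witnesses": ftt,
        "min_hosts": 2 * ftt + 1,
        "capacity_multiplier": ftt + 1,
    }


def raw_capacity_gb(object_size_gb: float, ftt: int) -> float:
    """Raw capacity consumed by a mirrored object (ignores dedup/compression)."""
    return object_size_gb * raid1_requirements(ftt)["capacity_multiplier"]


# FTT=1: two replicas plus one witness, spread over three hosts
print(raid1_requirements(1))
print(raw_capacity_gb(100, 1))  # a 100 GB VMDK with FTT=1 -> 200
```

Raising FTT to 2 under this model requires five hosts and triples the raw capacity, which is the cost that erasure coding (described later) is designed to reduce.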

vSAN uses disk groups to pool flash devices and magnetic disks into single management constructs. A disk group consists of one flash device, used as the read cache/write buffer, and up to seven capacity devices, which can be magnetic disks (Hybrid mode) or flash devices (All-Flash mode). Every disk group must have both a cache tier and a capacity tier, and a host can have up to five disk groups.
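
The disk group limits above can be expressed as a simple validation check. This Python sketch uses hypothetical `DiskGroup` and `Host` types (not a VMware API) and encodes only the limits stated in this section: exactly one cache device and one to seven capacity devices per disk group, and at most five disk groups per host.

```python
from dataclasses import dataclass, field

MAX_CAPACITY_DEVICES_PER_GROUP = 7
MAX_DISK_GROUPS_PER_HOST = 5


@dataclass
class DiskGroup:
    cache_devices: int      # flash devices for the read cache/write buffer
    capacity_devices: int   # magnetic disks (Hybrid) or flash (All-Flash)


@dataclass
class Host:
    name: str
    disk_groups: list[DiskGroup] = field(default_factory=list)


def validate_host(host: Host) -> list[str]:
    """Return human-readable problems; an empty list means the layout fits the limits."""
    problems = []
    if len(host.disk_groups) > MAX_DISK_GROUPS_PER_HOST:
        problems.append(
            f"{host.name}: {len(host.disk_groups)} disk groups "
            f"(maximum {MAX_DISK_GROUPS_PER_HOST})"
        )
    for i, group in enumerate(host.disk_groups):
        if group.cache_devices != 1:
            problems.append(f"{host.name} group {i}: needs exactly one cache device")
        if not 1 <= group.capacity_devices <= MAX_CAPACITY_DEVICES_PER_GROUP:
            problems.append(
                f"{host.name} group {i}: {group.capacity_devices} capacity devices "
                f"(allowed 1-{MAX_CAPACITY_DEVICES_PER_GROUP})"
            )
    return problems


esx01 = Host("esx01", [DiskGroup(1, 7), DiskGroup(1, 4)])
esx02 = Host("esx02", [DiskGroup(1, 8)])
print(validate_host(esx01))  # []
print(validate_host(esx02))  # one problem: too many capacity devices
```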

Any supported hardware can be used for vSAN. VMware maintains an extensive Hardware Compatibility List (HCL), and it also offers vSAN-ready nodes from many server hardware vendors that come pre-built with fully supported hardware.

Other HCI providers require a virtual appliance running on each host to provide the storage, which typically means reserving CPU and memory on every host in the cluster. vSAN, by contrast, is embedded directly in the vSphere hypervisor kernel, deployed as a vSphere Installation Bundle (VIB). vSAN still consumes resources, typically up to 10% of a host's compute, but it does not run as a VM competing with other VMs for resources. Because it is integrated with vSphere, admins manage it with the same tools they already use for vSphere, and vSAN fully supports native vMotion and DRS.

Standard and 2-Node Cluster deployments are supported with vSAN Standard licenses, while Stretched Cluster deployments require the vSAN Enterprise license. Because vSAN is implemented entirely in software, these deployments can be scaled as required.

A Stretched vSAN Cluster configuration provides high Availability across datacenters with zero RPO. Using storage policies, redundancy can be specified on a per-VM basis: locally within a site, across sites, or both.

Advanced features such as deduplication, compression and erasure coding can be enabled to make more efficient use of the storage. On an All-Flash configuration, deduplication and compression can be enabled simply from a drop-down box. Savings vary with the type of data, but savings of up to 7x have been reported. Deduplication and compression are enabled or disabled together as a single cluster-wide setting. Deduplication occurs when data is de-staged from the cache tier to the capacity tier, and its scope is limited to each disk group. Compression is applied after deduplication, just before the data is written to the capacity tier.

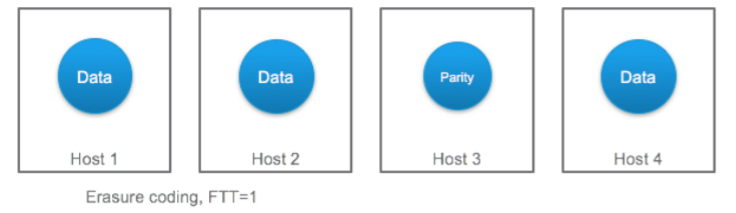
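
The order of operations described above (deduplicate on de-stage, then compress before writing) can be illustrated with a toy model. This Python sketch assumes fixed 4 KB blocks fingerprinted with SHA-1 and uses `zlib` purely as a stand-in compressor; it is not vSAN's actual on-disk implementation. Each disk group gets its own `capacity_tier` dictionary, reflecting that deduplication does not span disk groups.

```python
import hashlib
import zlib

BLOCK_SIZE = 4096  # assumed fixed block size for this illustration


def destage(data: bytes, capacity_tier: dict[str, bytes]) -> list[str]:
    """Move data from the cache tier to one disk group's capacity tier.

    Each block is deduplicated first (identical blocks are stored once),
    then compressed just before it is written. Returns the object's block
    map: the list of block fingerprints needed to read the data back.
    """
    block_map = []
    for offset in range(0, len(data), BLOCK_SIZE):
        block = data[offset:offset + BLOCK_SIZE]
        fingerprint = hashlib.sha1(block).hexdigest()
        if fingerprint not in capacity_tier:                   # deduplication
            capacity_tier[fingerprint] = zlib.compress(block)  # then compression
        block_map.append(fingerprint)
    return block_map


def read_back(block_map: list[str], capacity_tier: dict[str, bytes]) -> bytes:
    """Reassemble the original data from the block map."""
    return b"".join(zlib.decompress(capacity_tier[fp]) for fp in block_map)


disk_group_1: dict[str, bytes] = {}  # dedup scope: this disk group only
data = b"A" * BLOCK_SIZE * 8 + b"B" * BLOCK_SIZE * 2
block_map = destage(data, disk_group_1)
stored = sum(len(b) for b in disk_group_1.values())
print(len(block_map), len(disk_group_1))  # 10 logical blocks, 2 unique
print(f"{len(data) / stored:.0f}x savings on this highly repetitive data")
```

Because the example data is extremely repetitive, the computed ratio is far higher than real workloads achieve; it only demonstrates the mechanics.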

Erasure coding is another feature available for All-Flash configurations. It provides failure tolerance comparable to RAID 1 mirroring while reducing capacity requirements: the data is broken into multiple pieces, spread across multiple hosts and supplemented with parity data, so that it can be recreated if one piece (RAID 5) or two pieces (RAID 6) are lost. RAID 5 erasure coding requires a minimum of four hosts in the cluster; RAID 6 requires a minimum of six.
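
The parity idea behind RAID 5 can be shown with XOR. This Python sketch assumes a 3+1 layout (three data pieces plus one parity piece, matching the four-host minimum) and recovers a single lost piece. RAID 6 adds a second parity piece to survive two failures, which this sketch does not implement. The multiplier table compares raw capacity consumed per unit of data, assuming 3+1 RAID 5 and 4+2 RAID 6 layouts.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode_raid5(data: bytes, data_pieces: int = 3) -> list[bytes]:
    """Split data into equal pieces and append one XOR parity piece (3+1 layout)."""
    size = -(-len(data) // data_pieces)               # ceiling division
    padded = data.ljust(size * data_pieces, b"\0")
    pieces = [padded[i * size:(i + 1) * size] for i in range(data_pieces)]
    return pieces + [reduce(xor_bytes, pieces)]


def rebuild(pieces: list) -> list:
    """Recreate the one missing piece (marked None) by XOR-ing the survivors."""
    missing = pieces.index(None)
    restored = list(pieces)
    restored[missing] = reduce(xor_bytes, [p for p in pieces if p is not None])
    return restored


# Raw capacity consumed per unit of data (compare rows with the same FTT)
CAPACITY_MULTIPLIER = {
    "RAID 1, FTT=1": 2.0,
    "RAID 5 (3+1), FTT=1": 4 / 3,
    "RAID 1, FTT=2": 3.0,
    "RAID 6 (4+2), FTT=2": 6 / 4,
}

pieces = encode_raid5(b"vSAN erasure coding demo")  # four pieces on four hosts
pieces[1] = None                                     # one host fails
recovered = rebuild(pieces)
print(b"".join(recovered[:3]).rstrip(b"\0"))  # b'vSAN erasure coding demo'
```

Under these assumptions, RAID 5 protects against one failure at about 1.33x raw capacity instead of 2x for RAID 1, and RAID 6 protects against two failures at 1.5x instead of 3x.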

VMware vSAN 6.6 features

VMware has released vSAN updates on a six-month cycle since 2015, and 6.6 is the latest version. It brings more than 20 new features; the key ones are listed below:

- Native encryption data-at-rest

- Resilient management independent of vCenter

- Stretched clusters with local failure protection

- Simple networking with Unicast

- vSAN easy one-click install

VMware vSAN 6.6 requirements and considerations

To run vSAN 6.6, consider the following requirements:

- Must be running at least vCenter Server 6.5.0d

- Must be running at least vSphere ESXi 6.5.0d

- Minimum of two physical hosts, each with a configured disk group, plus a witness appliance (2-node cluster)

- Minimum of three physical hosts for standard vSAN (at least four recommended)

- 10 GbE network for All-Flash; minimum dedicated 1 GbE network for Hybrid

- Minimum of one flash device for the cache tier and one capacity device per host

- Hybrid and All-Flash disk groups cannot be mixed in the same cluster

- Minimum of 32 GB of RAM per host participating in vSAN

- Consider using vSAN ready nodes and consistent hardware configuration in the vSAN cluster

- Consider deploying vSAN on a vSphere Distributed Switch (vDS) so that you can use Network I/O Control (NIOC)
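
A pre-deployment check against the hardware requirements above might look like the following Python sketch. `HostSpec` and `check_cluster` are hypothetical helpers, not part of any VMware tool. The sketch covers host count, network speed, RAM, device counts and the Hybrid/All-Flash rule; it does not check software versions, whether the 1 Gb Hybrid link is dedicated, or the four-host recommendation.

```python
from dataclasses import dataclass

MIN_RAM_GB = 32


@dataclass
class HostSpec:
    name: str
    ram_gb: int
    nic_gbps: int        # vSAN network speed for this host
    all_flash: bool      # True = All-Flash disk groups, False = Hybrid
    cache_devices: int
    capacity_devices: int


def check_cluster(hosts: list[HostSpec], witness_appliance: bool = False) -> list[str]:
    """Check a planned cluster against the requirements listed above."""
    problems = []
    min_hosts = 2 if witness_appliance else 3
    if len(hosts) < min_hosts:
        problems.append(f"need at least {min_hosts} hosts, found {len(hosts)}")
    if len({h.all_flash for h in hosts}) > 1:
        problems.append("Hybrid and All-Flash disk groups are mixed in one cluster")
    for h in hosts:
        required_gbps = 10 if h.all_flash else 1
        if h.nic_gbps < required_gbps:
            problems.append(f"{h.name}: {h.nic_gbps} Gb network, needs {required_gbps} Gb")
        if h.ram_gb < MIN_RAM_GB:
            problems.append(f"{h.name}: {h.ram_gb} GB RAM, needs {MIN_RAM_GB} GB")
        if h.cache_devices < 1 or h.capacity_devices < 1:
            problems.append(f"{h.name}: needs a cache device and a capacity device")
    return problems


cluster = [HostSpec(f"esx0{i}", 64, 10, True, 1, 4) for i in range(1, 4)]
print(check_cluster(cluster))      # []
print(check_cluster(cluster[:2]))  # too few hosts without a witness appliance
```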

VMware vSAN 6.6 and Veeam 9.5 — Better together

That’s scalability and the required infrastructure covered, but what about data Availability?

Other HCI vendors include their own backup solutions, with varying feature sets. VMware, by contrast, already has strong relationships with established Availability vendors such as Veeam, which integrate through the vSphere Storage APIs. Rather than adopting a new backup solution built specifically for vSAN, customers can choose to use existing, mature products. Many customers who already use Veeam Backup & Replication can also use it with vSAN.

Veeam recently announced that it is certified on vSAN. Interestingly, though, Veeam first added vSAN support back in 2014, with Patch 4 for Veeam Backup & Replication 7.0. Veeam's vSAN integration allows the Veeam proxy to recognize VMs running on a vSAN datastore, and it applies smart logic when processing vSAN datastores. Because vSAN has no data locality, a VM's data objects do not necessarily reside on the host where the VM is running; they are distributed across the local storage of multiple hosts. Veeam therefore obtains information about the data distribution from vCenter, and if a proxy is local to the data, Veeam gives that proxy priority to reduce network traffic. To take advantage of this, it is recommended to deploy one virtual proxy per vSAN node.
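
The proxy-selection idea can be sketched as a simple heuristic. This Python example is hypothetical and is not Veeam's actual algorithm: given where each of a VM's data components lives and which hosts run a proxy, it prefers the proxy co-located with the most of that VM's data, and otherwise falls back to any available proxy, which then reads the data over the network.

```python
from collections import Counter


def pick_proxy(components: list[tuple[str, int]], proxies_by_host: dict[str, str]) -> str:
    """Choose a backup proxy for a VM stored on vSAN (hypothetical heuristic).

    components: (esxi_host, size_gb) for each data component of the VM's disks.
    proxies_by_host: esxi_host -> name of the virtual proxy running on it.
    """
    if not proxies_by_host:
        raise ValueError("no backup proxies available")
    data_per_host = Counter()
    for host, size_gb in components:
        data_per_host[host] += size_gb
    # Prefer the proxy on the host holding the most of this VM's data
    for host, _ in data_per_host.most_common():
        if host in proxies_by_host:
            return proxies_by_host[host]
    # No local proxy: fall back to any proxy (data is read over the network)
    return next(iter(proxies_by_host.values()))


vm_components = [("esx01", 100), ("esx02", 100), ("esx02", 50)]
proxies = {"esx01": "proxy-01", "esx02": "proxy-02"}
print(pick_proxy(vm_components, proxies))  # proxy-02
```

With one proxy per vSAN node, the first loop always finds a local proxy as long as the VM has at least one component, so the network fallback is never used.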

Veeam also recognizes the Storage Policy-Based Management (SPBM) policies that vSAN uses. With policy-driven storage, customers can assign different SLAs to different workloads, based on performance or failure tolerance. Veeam detects the configured storage policies, backs them up for each virtual disk and automatically restores them during a full VM restore. This means that when a VM is fully restored to its original location, it is once again compliant with its storage policy, preserving its SLAs.

The most recent support for vSAN 6.6 is included in Veeam Backup & Replication 9.5 Update 2. In addition, Veeam is now listed in the vSAN HCL for vSAN Partner Solutions.

Additional resources:

- What’s new in vSphere 6.5 and how Veeam fully supports it

- Veeam Backup & Replication is now certified on vSAN