Recently, I blogged about how easy it is to start VMware VM replication with Veeam so you can achieve the best possible recovery time and recovery point objectives (RTPOs). Today, I want to explain how you can overcome the challenge of a heavy replication load on your network. With the assumption that your available bandwidth is limited, I’d also like to share some ways that Veeam Backup & Replication v8 can reduce VM replication traffic over the WAN.

Replicate onsite or offsite?

Your business goals will dictate the vSphere replication scenario that you’ll need. Onsite replication is a high-availability scenario with replicas stored at the same site. An offsite scenario protects your critical data from any problems at the production site by keeping data copies at a remote site. Technically, the scenario is defined by the location of your source and target hosts. For onsite replication, both your source and target ESXi hosts should be at the same site and connected over the LAN. For offsite replication, one or more target ESXi hosts are deployed off-premises, where they are connected to the production source host via the WAN.

How much bandwidth for replication do you need?

The remote replication cycle for a VMware VM will take longer than the backup copy process for the very same VM. This additional overhead comes partly from using the VMware data protection APIs (application programming interfaces) to create replicated vSphere VMs. VM replication over the WAN (or a slow LAN) may take hours, even when transferring increments only (imagine a huge Exchange server with several gigabytes of daily changes).

You might be asking how much bandwidth is enough to replicate your vSphere VMs. The required bandwidth depends on multiple factors, including the number of VMs, the amount of changed data, the network latency and the replication window you need to fit into. Below is a basic rule for estimating the required connection speed:

Required WAN/LAN speed, Mbps = (Total daily changes, MB / (Replication window, hours x 3600)) x 8

Please note that this estimate errs on the high side. It doesn’t account for the traffic-optimization options that Veeam Backup & Replication uses to speed up the process, which add to the time already saved by increment-only processing.
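To make the rule above concrete, here is a minimal Python sketch of the same arithmetic. The function name and the sample figures (20 GB of daily changes, an 8-hour window) are illustrative assumptions, not values from Veeam Backup & Replication, and the result ignores any traffic optimization.

```python
def required_mbps(daily_changes_mb: float, window_hours: float) -> float:
    """Estimate the link speed, in megabits per second, needed to move
    the daily changes within the replication window."""
    if window_hours <= 0:
        raise ValueError("replication window must be greater than zero")
    window_seconds = window_hours * 3600
    megabytes_per_second = daily_changes_mb / window_seconds
    # 1 byte = 8 bits, so multiply by 8 to convert MB/s to Mbps.
    return megabytes_per_second * 8


if __name__ == "__main__":
    # Hypothetical workload: 20 GB (20,480 MB) of changes, 8-hour window.
    print(f"{required_mbps(20_480, 8):.2f} Mbps")  # prints "5.69 Mbps"
```

Halving the replication window doubles the required speed, so the window is usually the first number worth revisiting when the estimate exceeds your available link.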

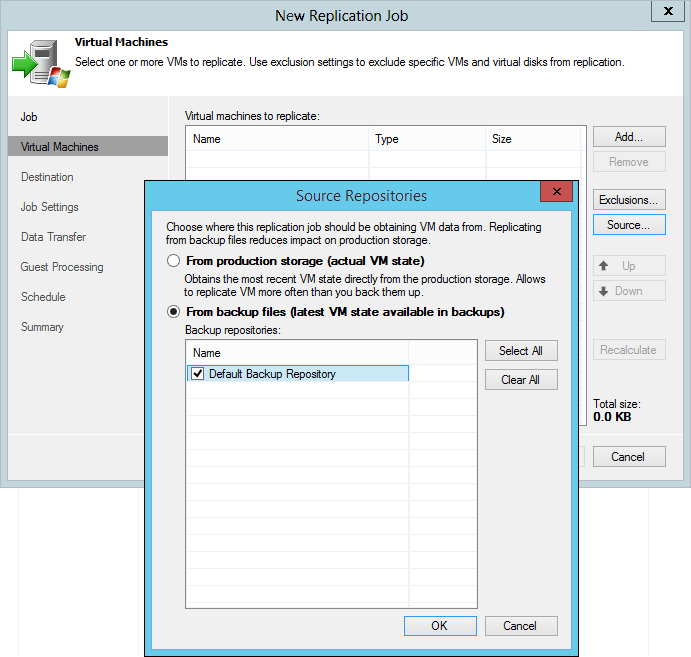

Utilizing backup as a source

Replicas do not and cannot substitute for backups. Replicas are just another way to protect your critical data and get the best possible SLAs if there is a major failure. To maximize protection for tier-1 applications, you should employ both options. If you already have a VM backup with a chain of increments, why not use it as a source for a remote replica and remove the replication load from production? This is one of the replication enhancements in Veeam Backup & Replication v8.

When creating a remote replica from backup, we like to say: It Just Works! As always with Veeam, this process is automatic. There are no special jobs for the task, just a regular replication job. The only trick here is that you must specify a backup repository with the required VM backups as the source of data, instead of specifying your live production VM. Veeam Backup & Replication will retrieve all of the data from the backup repository, using the latest restore point in the backup chain to build a new replica restore point, and so offload the replication traffic from your production workloads. This also saves a lot of read I/O on the running virtual machine (including the snapshot process from vStorage APIs for Data Protection (VADP)), which matters all the more because moving data for replicated VMs off-site usually takes longer.

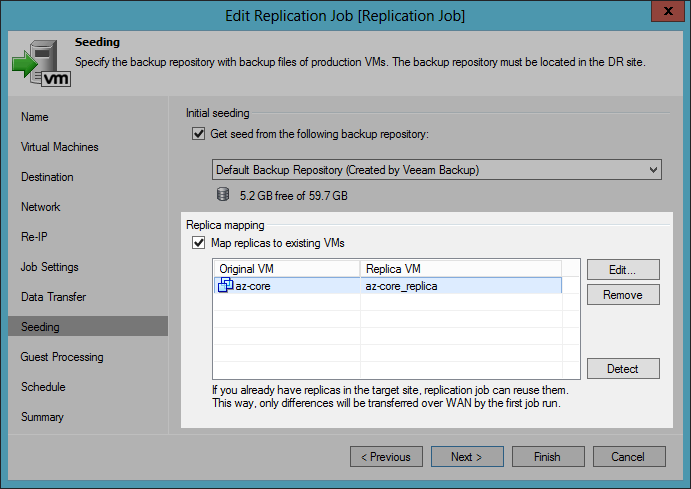

Reducing replication traffic with replica seeding

Replica seeding can help you reduce the initial replication-data flow. Its underlying mechanism is very similar to a remote replica from backup because it also uses a VM backup as a replica seed. Replica seeding, however, uses the backup file only during the first run of a replication job. To build further VM replica restore points, the replication job addresses the production environment and reads VM data from the source storage.

You need to prepare the seeding backup of the VMware VM and copy it to your target host. Because the backup copy job supports WAN acceleration with global deduplication, data-block verification and traffic compression, this transfer will be fast! When executing replica seeding, Veeam Backup & Replication will restore the VM from the seed backup, synchronize its state with the production VM and replicate only the changed data.
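The idea of "replicate only the changed data" can be illustrated with a small Python sketch that compares a seed image with the current production image block by block. This is a conceptual illustration only, not how Veeam Backup & Replication is implemented. The function names, the hash choice and the tiny 4-byte block size are all assumptions made for readability.

```python
import hashlib
from typing import List

BLOCK_SIZE = 4  # unrealistically small, so the example is easy to follow


def block_digests(data: bytes, block_size: int = BLOCK_SIZE) -> List[str]:
    """Split data into fixed-size blocks and hash each block."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def changed_blocks(seed: bytes, production: bytes,
                   block_size: int = BLOCK_SIZE) -> List[int]:
    """Return indexes of production blocks that differ from the seed
    (or do not exist in the seed) and would have to be transferred."""
    seed_digests = block_digests(seed, block_size)
    prod_digests = block_digests(production, block_size)
    changed = []
    for index, digest in enumerate(prod_digests):
        if index >= len(seed_digests) or seed_digests[index] != digest:
            changed.append(index)
    return changed


if __name__ == "__main__":
    seed_image = b"AAAABBBBCCCC"            # state captured in the seed backup
    production_image = b"AAAAXXXXCCCCDDDD"  # state of the live VM today
    print(changed_blocks(seed_image, production_image))  # prints [1, 3]
```

In this toy example only two of the four blocks would cross the WAN, which is the whole point of seeding: the bulk of the data is already at the target before the first replication run.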

Optimizing replication traffic with replica mapping

Another way to optimize bandwidth usage is replica mapping, which is when you map a production VM to its existing replica at the DR (disaster recovery) site. This can come in handy if you need to reconfigure or recreate replication jobs when, for instance, you have relocated an existing replica to a new host or storage. If you are using a shared WAN/LAN connection, I also recommend that you apply network-throttling rules to prevent replication jobs from consuming all of the bandwidth available in your VMware environment.

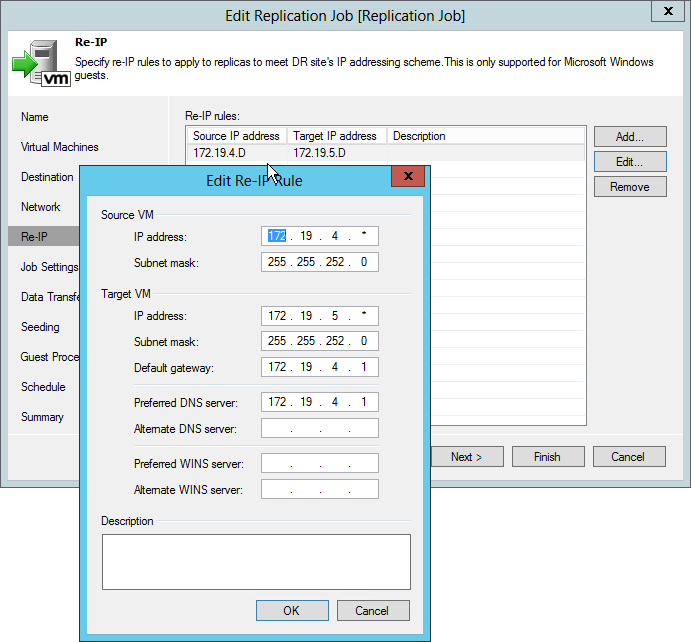

Solving possible configuration issues

Replicating VMware VMs between subnets with different network settings can lead to some configuration problems. By default, Veeam Backup & Replication gives a replica the same network configuration as its original VM. DR and production networks may not match, but that’s not a problem. Just set your custom rules for network mapping. Another great Veeam Backup & Replication reconfiguration feature is the ability to assign an IP-processing scheme for replicated Windows-based VMs.
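To show what an IP-processing rule does in principle, here is a minimal Python sketch that moves an address from a production subnet to a DR subnet while keeping the host part. The function name, the rule shape and the sample subnets are hypothetical. In the product itself you configure such rules in the replication job settings, not in code.

```python
import ipaddress


def apply_ip_rule(vm_ip: str, source_net: str, target_net: str) -> str:
    """Map vm_ip from source_net into target_net, keeping the host part.
    Addresses outside source_net are returned unchanged."""
    source = ipaddress.ip_network(source_net)
    target = ipaddress.ip_network(target_net)
    address = ipaddress.ip_address(vm_ip)
    if address not in source:
        return vm_ip  # the rule does not apply to this address
    if source.prefixlen != target.prefixlen:
        raise ValueError("source and target subnets must be the same size")
    # Offset of the address inside the source subnet (the host part).
    host_part = int(address) - int(source.network_address)
    return str(target.network_address + host_part)


if __name__ == "__main__":
    # Hypothetical production subnet 192.168.1.0/24, DR subnet 10.10.5.0/24.
    print(apply_ip_rule("192.168.1.25", "192.168.1.0/24", "10.10.5.0/24"))
    # prints "10.10.5.25"
```

Keeping the host part stable means a replica that was .25 in production is still .25 at the DR site, which makes DNS and firewall records easier to reason about after failover.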

I welcome your comments on this blog. You’re also invited to share the traffic optimization results you achieve using Veeam Backup & Replication for VMware replication.

Follow this Veeam blog for more updates. My next post will focus on more hot topics, including performing replica failover and failback with Veeam!

Additional helpful resources:

- Veeam Community Forums: Replication Traffic

- Recorded webinar: Software-Based WAN Acceleration 101

- Recorded webinar: Better disaster-recovery planning with Veeam replication enhancements