Deduplication Appliance Best Practices

Purpose

This article provides links to vendor-provided best practices documents and vendor-specific configuration advice found in the Veeam Backup & Replication User Guide. It also offers general recommendations for configuring Veeam Backup & Replication jobs and repositories when utilizing deduplication storage.

By default, the Veeam Backup & Replication settings are primarily optimized for non-deduplication storage. These default settings may have performance impacts or limit the effectiveness of deduplication storage. Specifically, the default configuration may not allow deduplication storage to fully leverage its native compression and deduplication capabilities, resulting in suboptimal space-saving benefits.

Solution

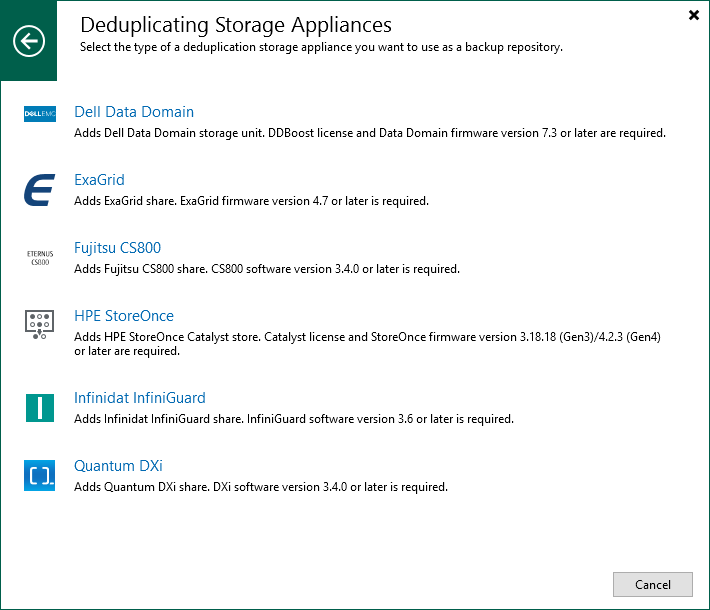

Integrated Deduplication Storage Appliances

The following storage appliances directly integrate with Veeam Backup & Replication. When added as a Backup Repository, the software will configure the default repository and job settings to align with the recommendations for that storage. To learn more, review the product pages below:

Vendor-Provided Best Practice Guides

Vendor-Specific Advice

3-2-1 Rule With Deduplication Storage

Step 1: Create backups on the first site with short-term and long-term retention.

Use a backup target storage system (general-purpose storage system) for short-term primary backups and instruct Veeam to copy the backups to a deduplication storage system for long-term retention.

A variant of this approach is to use a standard server with a battery-backed RAID controller and disks to store the primary backups (cache approach), and to use a backup copy job to copy them to a deduplication storage system for long-term retention.

Step 2: Add offsite target.

In addition to the above scenarios, you can create an offsite copy of your data using the following options.

- Place deduplication storage at the second site. Use the Veeam backup copy job to create the secondary offsite backup from the primary backup.

- Place deduplication storage at both sites.

- Place deduplication storage at the primary site and use object storage or tape at the second site. Use Veeam backup to tape jobs or the Veeam scale-out backup repository cloud tier (connection to object storage) to store data offsite.

The scenario above reflects general best practices. Please get in touch with your deduplication storage vendor for further guidance and check the vendor links provided above for additional usable scenarios with the specific storage.
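As a rough illustration of the layouts above, the following sketch checks a planned set of copies against the common reading of the 3-2-1 rule: at least three copies of the data, on at least two kinds of media, with at least one copy offsite. The `Copy` class, the media labels, and the choice to count production data as one of the copies are assumptions made for this example; none of it is part of Veeam Backup & Replication.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str
    media: str      # free-form label, e.g. "general-purpose disk" (illustrative)
    offsite: bool


def satisfies_3_2_1(copies):
    """True if there are >= 3 copies, >= 2 media types, and >= 1 offsite copy."""
    media_types = {c.media for c in copies}
    has_offsite = any(c.offsite for c in copies)
    return len(copies) >= 3 and len(media_types) >= 2 and has_offsite


# Hypothetical layout: primary backups on general-purpose storage,
# backup copy to deduplication storage at a second site.
layout = [
    Copy("production data", "primary storage", offsite=False),
    Copy("short-term backup", "general-purpose disk", offsite=False),
    Copy("long-term backup copy", "deduplication storage", offsite=True),
]

print(satisfies_3_2_1(layout))      # True
print(satisfies_3_2_1(layout[:2]))  # False: only two copies, none offsite
```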

Generic Configuration Advice

Below is general Veeam Backup & Replication configuration advice to be used when backing up to deduplicated storage.

Job-Level Settings

All vendor-documented best practices and vendor-specific advice supersede the advice below.

The generic advice below may differ from your storage vendor's best practices. It is critical that you follow the best practices provided by the storage vendor when possible.

Backup Job Settings

Advanced Settings

Below are the general recommendations for the Advanced Settings (Storage > Advanced):

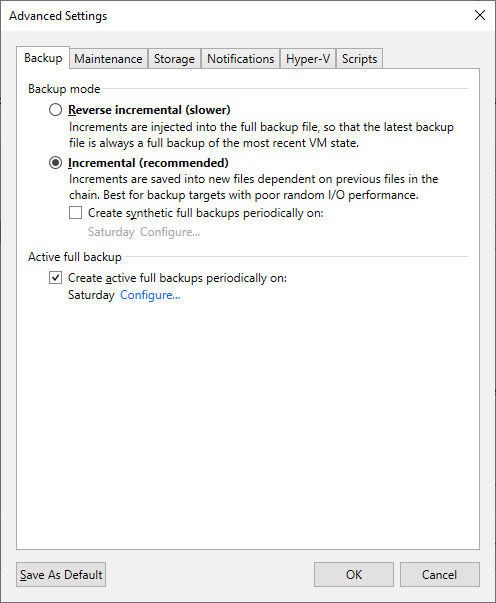

Backup Tab

In general, deduplication devices have the lowest performance during read operations. As such, to maximize performance, the backup jobs should be write-only. Configure the backup job to use (Forward) Incremental with weekly Active full backups to achieve this.

Note: Some Dedupe Appliances that integrate with Veeam Backup & Replication can perform Synthetic Full operations.

- Backup Mode

- (Forward) Incremental

- Active full backup

- Enabled and set to weekly.

Weekly full restore points keep backup chains short, so a restore has to read from as few restore points as possible.

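The effect of weekly active fulls on restore reads can be sketched with simple arithmetic. The example below assumes one backup per day and that a restore reads the most recent full plus every increment created after it; the function names are hypothetical and exist only for this illustration.

```python
def restore_points_to_read(days_since_last_full: int) -> int:
    """Files a restore reads: the full plus one increment per day since it."""
    if days_since_last_full < 0:
        raise ValueError("days_since_last_full must be non-negative")
    return 1 + days_since_last_full


def worst_case_chain(full_interval_days: int) -> int:
    """Longest chain when a full is created every `full_interval_days` days."""
    return restore_points_to_read(full_interval_days - 1)


print(worst_case_chain(7))   # 7  (weekly active full: 1 full + 6 increments)
print(worst_case_chain(30))  # 30 (a full only every 30 days)
```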

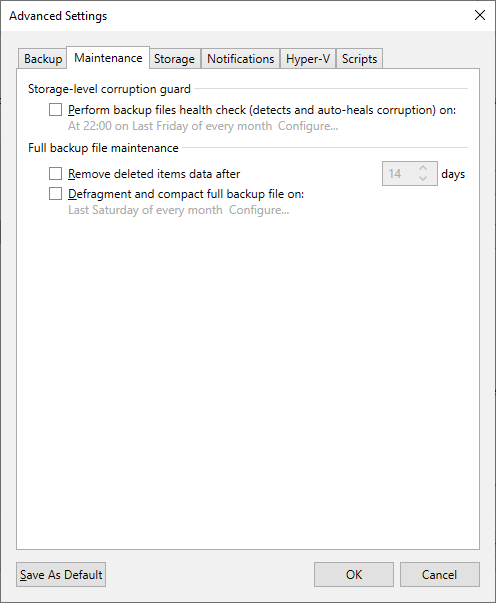

Maintenance Tab

- Perform backup files health check

- Disabled

While enabling this feature with dedupe storage is possible, it requires many read operations, which will take a very long time to complete. With larger backup files on deduplicated storage, this feature could cause a backup job to spend days performing the health check, causing the job to not create new restore points.

- Defragment and compact full backup file

- Disabled

There is no need for this option when Active Fulls are being created.

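To see how a health check can stretch into days, a back-of-the-envelope estimate helps. The sketch below assumes the check has to read the full contents of the backup files at a constant rate; the 20 TB size and the 100 MB/s read rate are hypothetical figures chosen for illustration, not measurements of any appliance.

```python
def health_check_hours(backup_size_tb: float, read_mb_per_s: float) -> float:
    """Hours needed to read `backup_size_tb` (decimal TB) at `read_mb_per_s`."""
    size_mb = backup_size_tb * 1_000_000  # 1 TB = 1,000,000 MB (decimal units)
    return size_mb / read_mb_per_s / 3600


# Hypothetical inputs: 20 TB of backup files, 100 MB/s sustained read rate.
hours = health_check_hours(backup_size_tb=20, read_mb_per_s=100)
print(f"{hours:.1f} hours ({hours / 24:.1f} days)")  # 55.6 hours (2.3 days)
```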

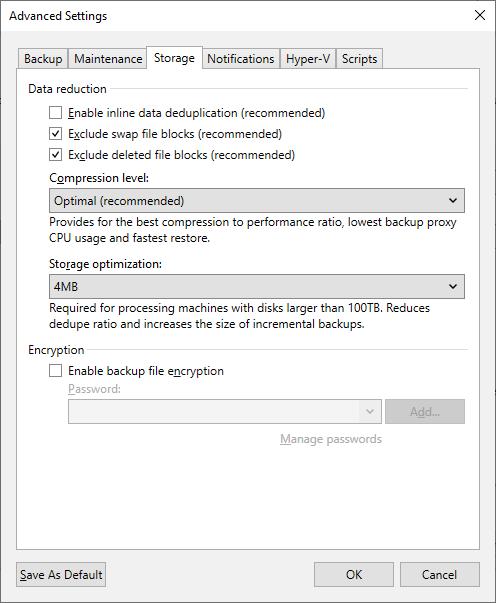

Storage Tab

- Inline data deduplication

- Disabled

Disabling deduplication in Veeam Backup & Replication will cause the restore points to appear larger, but because Veeam does not deduplicate them, it will allow the storage's deduplication function to be more effective.

Note: Storage that utilizes a non-dedupe zone for storing initially written files, such as ExaGrid's Landing Zone, may benefit from having Veeam's deduplication enabled to allow more restore points to be held in that rapid access state before being processed by the storage deduplication system.

- Compression level

- Optimal or Dedupe-friendly

The Repository settings section of this article advises enabling a function that will cause all restore points to be decompressed before being written. As such, the compression set at the job level will only impact data compression while backup data is being transferred across the network. To reduce network congestion, either Optimal or Dedupe-friendly compression may be used. The latter will slightly reduce the effort needed to decompress before writing. Compression at the job level should rarely be completely disabled as it will significantly increase the amount of data that must be transferred over the network to the machine writing the data to the backup repository.

- Storage optimization (Block Size)

- 4MB

This block size, formerly called "Local target (large blocks)," will help improve performance when storing backup files on deduplication storage as it reduces the size of the backup file's internal metadata table.

Note: For storage that utilizes a non-dedupe zone for storing initially written files, such as ExaGrid's Landing Zone, it is beneficial to set the Block Size to 1MB. A 1MB Block Size will cause Inline Data Deduplication to be more effective, thereby allowing more restore points to be held in that rapid access state before being processed by the storage deduplication system.

- Encryption

- Disabled

Data encryption has a negative effect on the deduplication ratio if you use a deduplicating storage appliance as a target. Veeam Backup & Replication uses different encryption keys for every job session. For this reason, encrypted data blocks sent to the deduplicating storage appliances appear different, even though they may contain duplicate data. If you want to achieve a higher deduplication ratio, you can disable data encryption. If you still want to use encryption, you can enable the encryption feature on the deduplicating storage appliance itself.

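The block size recommendation above can be illustrated by counting blocks. The sketch assumes the backup file's metadata table grows with the number of data blocks, which is a simplification made for this example; the function name and the 1 TiB file size are likewise hypothetical.

```python
import math


def block_count(backup_size_gib: float, block_size_mb: int) -> int:
    """Number of blocks needed to cover a backup of `backup_size_gib` GiB."""
    size_mb = backup_size_gib * 1024
    return math.ceil(size_mb / block_size_mb)


one_tib = 1024  # GiB
print(block_count(one_tib, 1))  # 1048576 blocks at 1MB
print(block_count(one_tib, 4))  # 262144 blocks at 4MB, a quarter as many
```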

All vendor-documented best practices and vendor-specific advice supersede the advice below.

The generic advice below may differ from your storage vendor's best practices. It is critical that you follow the best practices provided by the storage vendor when possible.

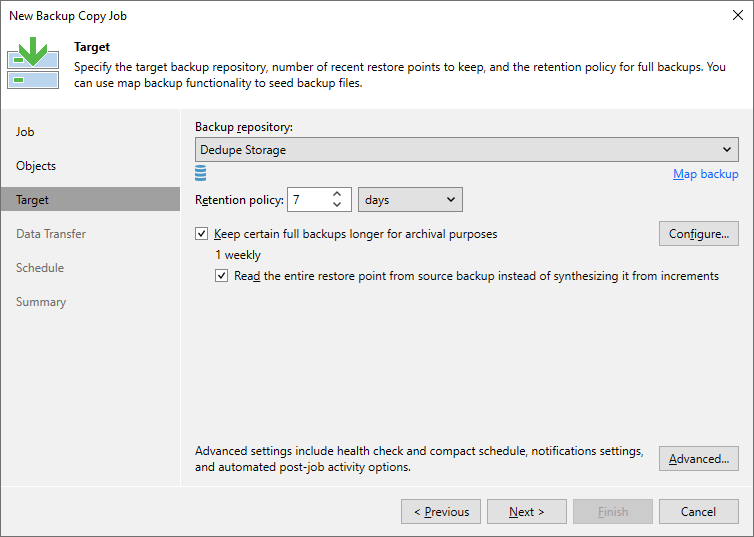

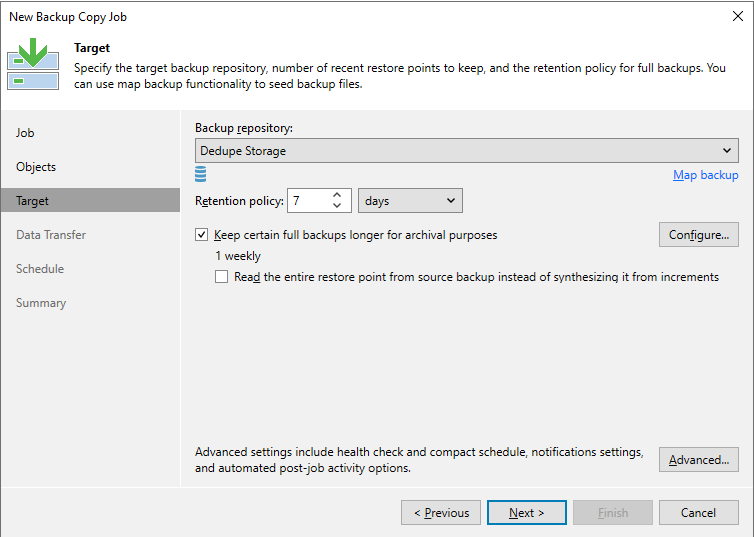

Backup Copy Job Settings

Backup Chain Settings

When targeting deduplicated storage, the backup copy job should be configured as follows to force the backup copy job to perform primarily write-only operations:

- Keep certain full backups longer for archival purposes

- Enabled and set to have at least 1 weekly

Enabling this option forces the backup copy job to enforce retention using the forward-incremental retention policy, which prevents the job from using the non-GFS retention method that involves merging the oldest increment into the full (a retention method known as Forever Forward Incremental). The Forever Forward Incremental retention method is suboptimal for deduplication storage because it involves many small read and write operations to enforce retention.

- Read the entire restore point from source backup instead of synthesizing it from increments

- Enabled*

Enabling this option for local-to-local backup copy jobs being written to deduplication storage that does not support block cloning will force the job to perform strictly write-only operations to create the GFS full. This avoids the unnecessarily taxing synthetic GFS full creation process, which builds the GFS full restore point by reading from previous restore points stored on the dedupe storage.

*This option should be disabled if:

- The backup copy job will be transferring backups across a low-speed connection. Specifically, a link so slow that transferring the entire full to the destination would be slower than the job creating a full synthetically using data already present on the dedupe storage.

Some offsite connections may be fast enough to perform the full transfer faster than the synthetic creation process. It will come down to comparing the network throughput vs. the read+write speed of the dedupe storage. For example, if the connection used to reach the backup copy destination is 100Mbps (12.5MB/s), and the dedupe storage can create a synthetic full at 40MB/s, the option should be disabled. However, if the connection is 500Mbps (62.5MB/s), that is faster than the dedupe storage in this example, so reading the entire restore point across the network would be quicker.

- The target deduplication appliance backup repository utilizes block cloning functionality.

Block cloning is available with integrated deduplication appliances (HPE StoreOnce Catalyst, ExaGrid, Data Domain DDboost, and Quantum DXi).

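The throughput comparison for slow links can be written out as a small calculation. This is a minimal sketch that only covers the link-speed condition: it ignores the block cloning condition and any protocol overhead, and the function names are hypothetical. The figures are the ones used in the example above.

```python
def mbps_to_mb_per_s(mbps: float) -> float:
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8


def read_entire_restore_point(link_mbps: float, synthetic_mb_per_s: float) -> bool:
    """True if copying the whole full over the link should beat synthesizing it."""
    return mbps_to_mb_per_s(link_mbps) > synthetic_mb_per_s


print(mbps_to_mb_per_s(100))               # 12.5
print(read_entire_restore_point(100, 40))  # False: disable the option
print(read_entire_restore_point(500, 40))  # True: keep the option enabled
```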

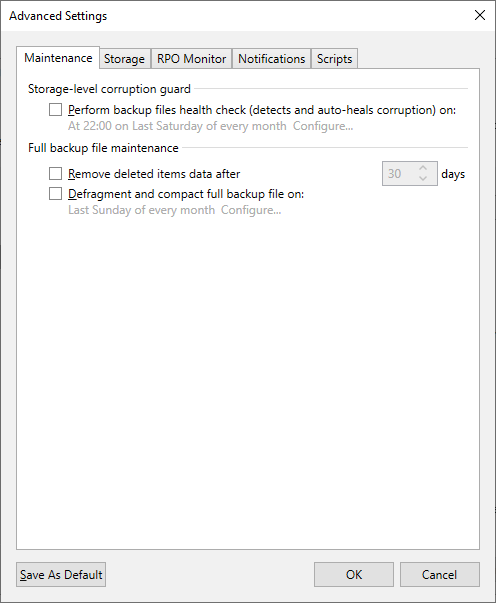

Advanced Settings

Below are the general recommendations for the Advanced Settings (Target > Advanced):

Maintenance Tab

- Perform backup files health check

- Disabled

While enabling this feature with dedupe storage is possible, it requires many read operations, which will take a very long time to complete. With larger backup files on deduplicated storage, this feature could cause a backup job to spend days performing the health check, causing the job to not create new restore points.

- Defragment and compact full backup file

- Disabled

There is no need for this option when Active Fulls are being created.


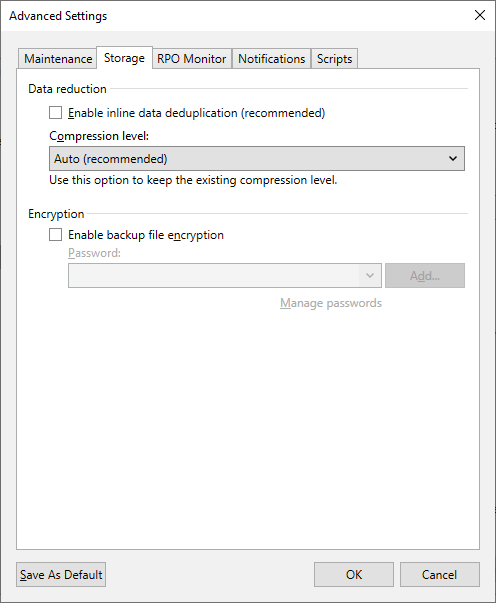

Storage Tab

- Inline data deduplication

- Disabled

Disabling deduplication in Veeam Backup & Replication will cause the restore points to appear larger, but because Veeam does not deduplicate them, it will allow the storage's deduplication function to be more effective.

Note: Storage that utilizes a non-dedupe zone for storing initially written files, such as ExaGrid's Landing Zone, may benefit from having Veeam's deduplication enabled to allow more restore points to be held in that rapid access state before being processed by the storage deduplication system.

- Compression level

- Auto

The Repository settings section of this article advises enabling a function that will cause all restore points to be decompressed before being written. As such, the compression set at the job level will only impact data compression while backup data is being transferred across the network.

- Encryption

- Disabled

Data encryption has a negative effect on the deduplication ratio if you use a deduplicating storage appliance as a target. Veeam Backup & Replication uses different encryption keys for every job session. For this reason, encrypted data blocks sent to the deduplicating storage appliances appear different, even though they may contain duplicate data. If you want to achieve a higher deduplication ratio, you can disable data encryption. If you still want to use encryption, you can enable the encryption feature on the deduplicating storage appliance itself.

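The effect of per-session keys on deduplication can be demonstrated with a toy model. The sketch below uses a SHA-256 fingerprint as a stand-in for how a deduplicating appliance might recognize identical blocks, and a deliberately simple XOR keystream as a stand-in for encryption. Both are assumptions for illustration only: this is not the algorithm Veeam Backup & Replication or any appliance uses, and the toy cipher is not secure.

```python
import hashlib


def toy_encrypt(data: bytes, key: bytes) -> bytes:
    """XOR data with a SHA-256 counter keystream. Illustrative only, not secure."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        counter = (offset // 32).to_bytes(8, "big")
        keystream = hashlib.sha256(key + counter).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, keystream))
    return bytes(out)


def fingerprint(block: bytes) -> str:
    """Hash a block the way a dedupe engine might to detect duplicates."""
    return hashlib.sha256(block).hexdigest()


block = b"identical guest data " * 200
session_keys = [b"key-for-session-1", b"key-for-session-2"]  # hypothetical keys

plain = {fingerprint(block) for _ in session_keys}
encrypted = {fingerprint(toy_encrypt(block, key)) for key in session_keys}

print(len(plain))      # 1: the same block written twice deduplicates
print(len(encrypted))  # 2: the same block under two keys looks unique
```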

Backup Repository Settings

All vendor-documented best practices and vendor-specific advice supersede the advice below.

The generic advice below may differ from your storage vendor's best practices. It is critical that you follow the best practices provided by the storage vendor when possible.

The settings below are general advice for dedupe storage.

Storage Compatibility Settings

Below are the general recommendations for the Storage Compatibility Settings (Repository > Advanced...):

- Align backup file data blocks

- Enabled for dedupe storage that uses fixed block length deduplication or dedupe storage capable of block cloning.

- Disabled for dedupe storage that uses variable length deduplication.

- Decompress backup file data blocks before storing

- Enabled for all dedupe storage that does not have a non-dedupe landing zone.

Writing backup files that Veeam has neither compressed nor deduplicated allows the deduplication mechanisms within the storage to operate efficiently.

- This repository is backed by rotated drives

- Disabled

- Use per-machine backup files

- Enabled
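The repository recommendations above amount to a small decision table, sketched below. The `DedupeStorage` class and the dictionary keys are hypothetical names invented for this example; they are not Veeam Backup & Replication API or PowerShell parameters. Where the generic advice is ambiguous (for instance, variable-length deduplication combined with block cloning), the sketch favors enabling alignment, and the vendor's guidance should take precedence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DedupeStorage:
    fixed_block_dedupe: bool  # False means variable-length deduplication
    block_cloning: bool
    landing_zone: bool        # non-dedupe zone for initially written files


def repository_settings(storage: DedupeStorage) -> dict:
    """Map storage traits to the generic repository recommendations."""
    return {
        "align_backup_file_data_blocks": storage.fixed_block_dedupe or storage.block_cloning,
        "decompress_before_storing": not storage.landing_zone,
        "backed_by_rotated_drives": False,
        "use_per_machine_backup_files": True,
    }


# Hypothetical appliance: variable-length dedupe, no block cloning, no landing zone.
appliance = DedupeStorage(fixed_block_dedupe=False, block_cloning=False, landing_zone=False)
for setting, value in repository_settings(appliance).items():
    print(f"{setting}: {value}")
```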
